Astronomers are embarrassed and perplexed. They study the cosmos with ever more powerful telescopes, but rather than building up a more complete inventory of what the cosmos contains, they confront ever more insistent evidence for material that is invisible, neither emitting light nor absorbing it. Most of the stuff that governs the large-scale universe, and sculpts the structures in it, is very different from the atoms that the shining stars and nebulae are made of.

But astronomers shouldn’t have been surprised at this realization. There are many analogies closer to home. For instance, NASA’s spacecraft have given us beautiful images of the Earth at night, revealing the bright lights of the world’s cities, fires from gas and oil wells in the Middle East, and scattered light from small settlements. But if aliens, viewing Earth from afar, had just these night-time images, they’d have an incomplete and distorted view of our planet.

Likewise, the ancients, looking up at the sky, would have found it hard to believe that huge cumulus clouds are actually far lighter and less substantial than the invisible air that sustains them.

Astronomers viewing the wider cosmos realize they’re in the same predicament. It seems that galaxies, and even entire clusters of galaxies, are held together by the gravitational pull of about five times more material than we actually see.

Many lines of evidence support this conclusion. I’ll mention just two:

The first comes from disc galaxies, like our own Milky Way or Andromeda. Stars and gas circle around the central hub of such galaxies, at a speed such that centrifugal forces balance the gravitational pull towards the centre. This is analogous — though on a far larger scale — to the way the Sun’s gravitational pull holds the planets in their orbits. If the stars and gas in the outermost parts were feeling the gravity of the material we see, then the further out they were, the slower they would be moving — for the same reason that Pluto is moving more slowly around the Sun than the Earth is. But that’s not what is found: stars and gas clouds at different distances from the galaxy all orbit at more or less the same speed. If, in our own Solar System, Pluto were moving as fast as the Earth, we’d have to conclude that there was a shell of material outside the Earth’s orbit but within Pluto’s. Likewise, the high speed of this outlying material tells us that there’s more to galaxies than meets the eye. The entire luminous galaxy — an assemblage of stars and glowing gas — must be embedded in a dark halo, several times heavier and more extensive.
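The Solar System comparison can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes circular orbits, textbook values for the constants, and a mean Pluto distance of about 39.5 astronomical units; the function names are invented for this example.

```python
import math

G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30   # mass of the Sun, kg
AU = 1.496e11      # astronomical unit, m


def circular_speed(enclosed_mass_kg, radius_m):
    """Speed of a circular orbit at radius_m around the enclosed mass."""
    return math.sqrt(G * enclosed_mass_kg / radius_m)


def enclosed_mass(speed_m_s, radius_m):
    """Mass that must lie inside radius_m to sustain a circular orbit at speed_m_s."""
    return speed_m_s ** 2 * radius_m / G


r_earth = 1.0 * AU
r_pluto = 39.5 * AU  # assumed mean distance of Pluto from the Sun

# With only the Sun's mass pulling, speed falls as 1 / sqrt(distance).
v_earth = circular_speed(M_SUN, r_earth)
v_pluto = circular_speed(M_SUN, r_pluto)

# Hypothetical case from the text: Pluto moving as fast as the Earth.
mass_needed = enclosed_mass(v_earth, r_pluto)
extra_solar_masses = mass_needed / M_SUN - 1.0

print(f"Earth: {v_earth / 1e3:.1f} km/s, Pluto: {v_pluto / 1e3:.1f} km/s")
print(f"Extra mass needed inside Pluto's orbit: {extra_solar_masses:.1f} solar masses")
```

Under these assumptions Pluto moves at roughly a sixth of the Earth’s speed, and making it keep pace with the Earth would require several tens of solar masses of unseen material between the two orbits. The same logic, applied to the outskirts of galaxies, is what points to a dark halo.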

And there’s pervasive dark matter on still larger scales. The Swiss-American astronomer Fritz Zwicky argued in the 1930s that the galaxies in a cluster would disperse unless they were restrained by the gravitational pull of dark matter. He proposed that gravitational lensing — the bending by gravity of light rays from objects behind it — could reveal dark matter’s presence. This technique has now, many decades later, borne fruit, and it is sad that Zwicky didn’t live long enough to see images like the one below.

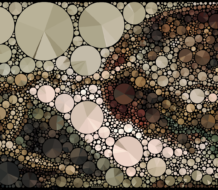

It depicts a big cluster of galaxies about a billion light years away. The image also shows numerous faint streaks and arcs: each is a remote galaxy, several times farther away than the cluster itself, whose image is, as it were, viewed through a distorting lens. Just as a pattern on background wallpaper looks distorted when viewed through a curved sheet of glass, the gravity of the cluster of galaxies deflects the light rays passing through it. The visible galaxies and gas in the cluster contribute far less light-bending than is needed to produce these distorted images: evidence that clusters, as well as individual galaxies, contain much more mass than we see.

The main case for dark matter rests on applying Newton’s law of gravity on scales millions or even billions of times larger than our Solar System, which is of course the only place where it has been reliably tested. It is proper to be cautious about such a huge extrapolation: indeed, some have suspected that we are being misled, and that gravity grips more strongly at large distances than standard theories predict. In this context the gravitational lensing is important because it corroborates the other evidence, yet it is based on rather different physics: Einstein’s rather than Newton’s.

We shouldn’t demur at the prospect that most of the stuff in the universe may be dark: why should everything in the sky shine, any more than everything on Earth does? But what could the dark matter be? The favoured view is that it consists of a swarm of particles, surviving from the “big bang,” which have so far escaped detection because they have no electric charge, and because they pass straight through ordinary material with barely any interaction. This hypothesis gains strong support from computer simulations of how galaxies form. Simulations based on the so-called “cold dark matter” hypothesis have been very successful in explaining how galaxies evolve and are clustered. Without the dark matter, it’s near-impossible to reconcile the existence of galaxies with what we know about the early universe.

Physicists have theorised about many types of particles that could have been created in the ultra-hot initial instants after the Big Bang, and could survive until the present. Thousands of these particles could be hitting us every second, but they almost all pass straight through us, and through any laboratory. Sometimes one of them collides with an atom, however, and sensitive experiments might detect the consequent recoil when this happens within (for instance) a lump of silicon. Several groups around the world have taken up this challenge. It requires delicate equipment, cooled down to temperatures near absolute zero, and deployed deep underground to reduce the background signal from cosmic rays and so forth.

One of the experimental difficulties is that other kinds of particles (for instance, decay products from radioactivity in rock) can cause similar signals. But genuine dark matter would have a distinctive signature, which would tell us that it came from our Galaxy and not just from the Earth. The Sun is moving steadily through the swarm of particles that make up our galactic halo. But the Earth is moving around the Sun; in June its speed relative to the galactic halo adds to that of the Sun; six months later it subtracts from it. A genuine signal from dark matter should consequently show this seasonal modulation. There have been claims of such detection. But recent results from a much more sensitive experiment called LUX revealed nothing. This doesn’t rule out these particles, but is starting to constrain their properties. Dedicated scientists will have to improve their techniques further, and stay down their tunnels and mineshafts for a few years more before detecting an unambiguous signal. But even then success isn’t assured.
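The size of the seasonal effect can be gauged with a toy model. The numbers below are assumptions chosen for illustration, not values from the text: a solar speed through the halo of about 230 km/s, an Earth orbital speed of about 29.8 km/s, an angle of roughly 60 degrees between the Earth’s orbital plane and the Sun’s direction of motion, and a peak in early June. The function name is hypothetical.

```python
import math

V_SUN = 230.0                     # km/s, assumed speed of the Sun through the halo
V_EARTH = 29.8                    # km/s, Earth's orbital speed
INCLINATION = math.radians(60.0)  # assumed tilt of Earth's orbit to the Sun's motion
PEAK_DAY = 152.0                  # assumed day of year (early June) of maximum speed
YEAR = 365.25                     # days


def speed_through_halo(day_of_year):
    """Earth's speed relative to the halo (km/s) in this simplified picture."""
    phase = 2.0 * math.pi * (day_of_year - PEAK_DAY) / YEAR
    # Only the component of Earth's velocity along the Sun's motion adds or subtracts.
    return V_SUN + V_EARTH * math.cos(INCLINATION) * math.cos(phase)


v_june = speed_through_halo(PEAK_DAY)
v_december = speed_through_halo(PEAK_DAY + YEAR / 2.0)

# Fractional swing of the speed about its mean value.
fractional_swing = (v_june - v_december) / (v_june + v_december)

print(f"June: {v_june:.1f} km/s, December: {v_december:.1f} km/s")
print(f"Fractional modulation: {fractional_swing:.3f}")
```

With these inputs the speed varies by only a few percent over the year, which hints at why a convincing detection demands such sensitive and stable experiments. How that speed change translates into a change in event rate depends on the detector and on the particles’ velocity distribution, which this sketch does not attempt to model.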

There are other quite different techniques. Dark matter particles in space might occasionally collide with their antiparticles, and produce gamma rays or positrons that could be detected by instruments in space. Here again there is no firm evidence. Another possibility is that new types of particles, candidates for dark matter, could be created in accelerators like the LHC in Geneva.

The intellectual stakes are high. Dark matter is the No. 1 problem in astronomy today, and it ranks high as a physics problem too. If we could solve it — and I’m optimistic that we will within the next decade — we would know what our universe was mostly made of; and we would discover, as a bonus, something quite new about the microworld of particles.

Moreover, the dark matter affects the cosmic long-range forecast: how the universe will be expanding tens of billions of years hence. However, even when its gravitational pull is added to that of “luminous” matter, the total yields only 30 percent of the “critical density” which would be required to overwhelm the kinetic energy and bring the cosmic expansion to an eventual halt. But it would be expected to cause the expansion to gradually slow down.

But in 1998, cosmologists had a big surprise. The expansion of the universe is not slowing down at all, but speeding up. On the cosmic scale gravitational attraction is overwhelmed by a mysterious new force latent in empty space which pushes galaxies away from each other. This force, sometimes called “dark energy,” isn’t important within galaxies and clusters, nor for their formation and structure. But this evidence for accelerating cosmic expansion presages a long-range forecast of an even colder and emptier universe. Nearly all the galaxies we can now observe will eventually become so redshifted that they disappear from view, rather like what happens when something falls into a black hole. Only the remnants of our Galaxy, Andromeda and a few of their smaller neighbours will remain in view.

I’m much more pessimistic about our prospects for tackling the riddle of dark energy. This will have to await new breakthroughs in understanding the bedrock nature of space itself. A generic feature of most theories that aim to unify gravity and the quantum principle is that space has a “grainy” structure and complex texture, but on a scale a trillion trillion times smaller than atoms, far beyond the capacity of any foreseeable experiments to probe directly.

We’re reconciled to the post-Copernican idea that we don’t occupy a central place in our universe. But now our cosmic status must seemingly be demoted still further. Particle chauvinism has to go: we’re not made of the dominant stuff in the universe. We, the stars, and the visible galaxies are just traces of sediment — almost a seeming afterthought — in the cosmos: entities quite different (and still unknown) controlled the emergence of its present structure, and will determine its eventual fate.

Discussion Questions:

1. How much problematic evidence is needed before it’s appropriate to jettison or modify well-established models or hypotheses?

2. How much of cosmology should be regarded as an “environmental” rather than “fundamental” science?

3. What are the odds on developing a theory that will clarify the nature of “dark energy”?

Discussion Summary

In the article that introduced this discussion, my focus was on phenomena that are no longer controversial – the existence of dark matter, and of a mysterious repulsive force latent in empty space. This progress is owed to powerful telescopes, on the ground and in space. But as always in science, each advance brings into focus some new questions that couldn’t previously have even been posed. In particular, we don’t know why our universe was “set up” with these particular ingredients – even though we can trace cosmic history back to a hot dense “beginning” nearly 14 billion years ago.

But I’d now like to close by raising two speculative issues. First, how close are we to arriving at a theory that explains these (and other) cosmic mysteries? And second, can we, as humans, expect ever to understand our cosmos and the complexities within it?

We’ve known for a long time that, when confronting the overwhelming mystery of what banged in the Big Bang and why it banged, Einstein’s theory isn’t enough. That’s because it treats space and time as smooth and continuous. We know, however, that no material can be chopped into arbitrarily small pieces: eventually, you get down to discrete atoms. Likewise, space itself has a grainy and “quantised” structure – but on a scale a trillion trillion times smaller.

On the largest scale, we may be even further from grasping the full extent of physical reality. The domain that astronomers call “the universe” – the space, extending more than 10 billion light years around us and containing billions of galaxies, each with billions of stars, billions of planets and maybe billions of biospheres – could be an infinitesimal part of the totality. Indeed, the results of our Big Bang could extend so far that somewhere there are assemblages of atoms in all possible configurations and combinations – including replicas of ourselves. Our universe is just one island in a vast cosmic archipelago. On the grandest scale of all, space may have an intricate structure. This scale could be so vast that our purview would be restricted to a tiny fragment: we wouldn’t be directly aware of the big picture, any more than a plankton whose “universe” is a spoonful of water is aware of the entire Earth.

To prove or refute these conjectures – to turn them into firm science – we need to achieve a unified understanding of the very large and the very small – of the cosmos and the quantum. Success, if achieved, would triumphantly conclude an intellectual quest that began with Newton, who unified the force that made the apple fall with the force that held the moon and planets in their orbits.

Such a theory would bring Big Bangs and multiverses within the remit of rigorous science. But it wouldn’t signal the end of discovery. Indeed, it would be irrelevant to the 99 per cent of scientists who are neither particle physicists nor cosmologists, and who are challenged by the baffling complexity of our everyday world. It may seem incongruous that scientists can make confident statements about remote galaxies, or about exotic sub-atomic particles, while being baffled about issues closer to hand – diet and disease, for instance. Yet even the smallest insects embody intricate structures that render them far more mysterious than atoms or stars.

Nearly all scientists are “reductionists” in so far as they think that everything, however complicated, obeys the basic equations of physics. But even if we had a hypercomputer that could solve those equations for (say) breaking waves, migrating birds or human brains, an atomic-level explanation wouldn’t yield the enlightenment we really seek. The brain is an assemblage of cells, and a painting is an assemblage of chemical pigment. But in both cases, what’s important and interesting is the pattern and structure – the emergent complexity.

Will scientists ever fathom all nature’s complexities? Perhaps they will. But we should be open to the possibility that we might, far down the line, encounter limits – hit the buffers – because our brains don’t have enough conceptual grasp. It’s amazing that we can comprehend so much of the counter-intuitive cosmos. But there may be some aspects of reality that are intrinsically beyond our ability to grasp them. Just as quantum theory is beyond a monkey’s comprehension, so an understanding of some aspects of reality may have to await the emergence of post-humans.

New Big Questions:

1. How close are we to arriving at a theory that explains these (and other) cosmic mysteries?

2. Can we, as humans, expect ever to understand our cosmos and the complexities within it?

Occam’s Razor, which is a least energy principle dominating our universe of events, states that the best solution to a problem is one which satisfies all of the major points about a problem in the simplest way, without adding too much complexity and too many new hypotheses. With respect to dark matter, cold neutrino gas in vast quantities is the best answer. 1st, dark matter, like neutrinos, cannot be very easily detected; in fact it is invisible to most matter. 2nd, a neutrino does not interact with light except by gravity. 3rd, there’s potentially a LOT of it around, as neutrinos are produced in enormous quantities by all stars during hydrogen fusion. 4th, the early universe was probably composed of nearly equal amounts of matter and antimatter. Much of that was destroyed by matter/antimatter interactions, which produced photons in enormous quantities and, of course, unimaginable masses and numbers of neutrinos.

The usual way of detecting fast-moving neutrinos would be Kamiokande’s method, in which neutrinos interact with atoms that emit a characteristic flash. The other detectors work in slightly different but similar ways. But this is not relevant to the issue. Neutrinos cannot form anything but dense masses of gas. How does one detect slow-moving neutrinos? Brute force would be moving a small detector at a significant fraction of c, the speed of light, through the gas, which would be the inverse of the usual detector. Another way would be to create a very intense beam of anti-neutrinos and see if they interact with any neutrinos, or vice versa. Another would be to let the normal solar flux of neutrinos do the detecting for us, using detectors which could find them this way also. Another way might be to observe the massive, very ancient globular star clusters, billions of years old, it’d seem, which have probably collected in and around them huge quantities of dark matter/neutrinos, which keep the cluster together.

But the money is on neutrino gas collections in huge quantities, literally the “ash” of the universe. And, before some object, there is simply no reason why ALL neutrinos from 15 billion years ago need to be moving really fast. 15 billion years is a long enough time for most to slow down, too. And black holes in most galaxies could collect the neutrinos, too. It’s not proven, but it accounts for dark matter which carries the dark energy, without postulating much else at all. Simplest is usually best and, in this case, favored. Herb

Question: Is it an assumption that dark matter is unseen by our present abilities because it might absorb all the energy that comes into contact with it, without reflecting any of it in a way that would be observable?

The idea that neutrinos surviving from the early universe could form the dark matter was extensively discussed in the 1980s. There are however important constraints which now rule this out. The most straightforward is the following. The number of primordial neutrinos is predictable: it is related by a simple ratio to the number of photons in the microwave background (this ratio having been established in the first second of cosmic expansion, when the temperature was above ten billion degrees and all relevant species were in thermal equilibrium). There would be, on average, about 100 in every cubic centimeter (outnumbering the baryons by a factor of more than a billion). We can therefore calculate the mass which neutrinos would need to have if they provided the measured density. It is about a hundred thousand times less than the electron mass. The problem then is that these very light particles would still have thermal velocities too high to ‘feel’ the gravity of galaxies and clusters: they would not concentrate in the gravitational potential wells because they would be moving with much more than the escape velocity. These arguments would rule out even more firmly the neutrinos produced in stars and supernovae, because these are more energetic and would be moving at close to the speed of light. The observations (and our models for how galaxies form) require the dark matter to be in much heavier particles – but particles which, like neutrinos, are neutral and have a very small cross section for interacting with each other and with ordinary matter.
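The mass estimate in this reply is simple division: the dark matter density divided by the number of neutrinos per unit volume. The sketch below uses the figure of about 100 per cubic centimeter from the reply; the critical density (for a Hubble constant near 70 km/s/Mpc) and the dark matter share of 25 percent are assumptions supplied for illustration, and the function name is hypothetical.

```python
RHO_CRITICAL = 9.2e-27       # kg/m^3, assumed critical density (H0 near 70 km/s/Mpc)
DARK_MATTER_FRACTION = 0.25  # assumed share of the critical density in dark matter
M_ELECTRON = 9.109e-31       # electron mass, kg


def required_neutrino_mass(neutrinos_per_cm3):
    """Mass (kg) each neutrino would need in order to supply all the dark matter."""
    neutrinos_per_m3 = neutrinos_per_cm3 * 1.0e6
    dark_matter_density = RHO_CRITICAL * DARK_MATTER_FRACTION
    return dark_matter_density / neutrinos_per_m3


m_required = required_neutrino_mass(100.0)  # number density quoted in the reply
ratio_to_electron = M_ELECTRON / m_required

print(f"Required neutrino mass: {m_required:.2e} kg")
print(f"Electron mass is larger by a factor of about {ratio_to_electron:.0f}")
```

With these inputs the electron comes out tens of thousands of times heavier than the required neutrino mass, the same order of magnitude as the figure quoted in the reply. The exact factor depends on the assumed density and on how many neutrino species are counted.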

If the dark matter were to absorb radiation incident on it, then surely we would observe ‘blocking’ of the radiation from distant galaxies lying beyond an intervening cluster – unless the absorbed energy were re-emitted in the same form and in the same direction, in which case the net effect would be zero!

One prospect for dark matter’s consistency is that it simply does not respond to the particle-wave duality of photons: it neither gives off photons nor is moved by them. A corollary of this then would be that dark matter’s stuff might not be wave-defined either. For instance, an electron moves with extremely short wavelengths because its mass requires it to. So, maybe dark matter doesn’t do that wavelength stuff; it pays no mind to the wave function.

That’s one idea, the idea behind which is that photon-relationship is a highly developed relationship among particles. In this possibility, dark matter would have most of the basic makeup of visible (high-relationship) matter, but lack the polishing aspect of photon-class sensitivity.

I’m not sure I fully understand your proposal, so I doubt this response will be very helpful. Just a brief comment, however: It may indeed eventually turn out that the dark matter is a manifestation of some as-yet-unenvisioned new physical principle. However, I think the best methodology is to seek an explanation within the constraints of our current framework of ideas, and only go outside this framework when/if we’re convinced that there isn’t a more conventional explanation. I’d bet that the explanation will be found within that framework. But it’s a bet I’d be happy to lose, because it would be immensely gratifying if cosmology had indeed revealed yet more fundamentally new features of physical reality.

1. The author writes, “But in 1998, cosmologists had a big surprise. The expansion of the universe is not slowing down at all, but speeding up.” This sentence reminds me of cycles, speeding up, then slowing down, although apparently the sentence refers not to cycles, but to an early error in finding that the expansion was slowing down. When working with gravity from a speculative source in the midst of a universe of great movement, and speaking of time spans of billions of years, how do we know that we are not measuring, taking photos, calculating and modeling from a tiny spot in the middle of a great cycle? In another million or billion years, all could change in another part of the cycle.

Could this have a parallel in global warming? I once heard a talk by a scientist who spoke of the earth having large temperature cycles with a frequency of some 800 years, warm then cool, warm then cool…. In 1970 some scientists looked for the earth to cool. By 1990, most seemed to think the earth would warm. Now after some 16 years of no warming, some scientists are rethinking. Within cycles, there can be great variation. Or there can be no cycling, just random or chaotic changes from the time that we sit in.

2. In discussion question 2, what is meant by “’environmental’ rather than ‘fundamental’ science”? I thought that cosmology was about ordering of the universe(s). Is part of that ordering “environmental” ordering?

It is my understanding that the gravitational force of the galaxy on the earth, for example, is related to the mass of the galaxy within the earth’s orbit and that the net gravitational force of the galaxy outside the earth’s orbit is zero. Is that correct? If it’s true, why does the gravity of the dark matter outside a sun’s orbit affect its velocity?

Let me address the three points you make. First, as regards ‘cycles’, we observe objects whose light set out one, two, three, … and up to twelve billion years ago. We know that for the first half of that time (roughly) the expansion was decelerating; thereafter, the density was so low that the so-called ‘dark energy’ came to dominate. The simplest long-range forecast is that this acceleration will continue for ever. But of course we can’t rule out the possibility that in the far future the ‘dark energy’ reverses its sign and causes a recollapse to a ‘big crunch’. But the timescales we’re talking about here are tens of billions of years. And I should add that we have good evidence that the expansion is isotropic (on scales larger than clusters of galaxies – i.e. on scales more than about 0.003 of the Hubble radius) and that it’s happening sufficiently uniformly that an observer on any other galaxy would see a similar isotropic expansion.

Regarding your analogy with the climate, the change in scientific opinion from a concern about cooling to a concern about warming reflected simply a growth in our understanding – not a change in the real situation. Nonetheless there are of course genuine cycles in the climate (the Milankovitch effects, on timescales of tens of thousands of years, which cause ice ages, plus longer-term effects detectable in the geological record). But of course the rapid rise in carbon dioxide due to burning of fossil fuels is an extra ‘forcing’ effect which has no real precedent in the Earth’s earlier history.

By distinguishing ‘fundamental’ and ‘environmental’ cosmology, I had in mind that cosmologists address two kinds of questions: (i) what are the key fundamental numbers that define the universe? and (ii) how did a ‘simple’ beginning lead, after more than 10 billion years, to the immense complexity of galaxies, stars, nebulae, planets, etc? The latter questions are now being addressed by elaborate computer simulations, rather like those used by meteorologists in weather prediction.

Thank you for the reply, though I am no astronomer and I don’t fully understand the reply. I guess you say that it doesn’t matter whether today we ourselves are in a particular part of a cycle that we are measuring from, that we can determine phenomenal cycling independently of our position.

The reply’s last paragraph’s mention of “elaborate computer simulations” brought to mind a question. I’ve done some computer modeling, though nothing approaching the elaborate scale you speak of. I wonder about the robustness, or fragility, of such grand modeling, which reminds me of a concern with deductive argumentation. If we have a 3-step syllogism, and the 2 premises are firm, we have confidence in the conclusion. But how about a 20-step argument with 19 deductive steps before reaching the conclusion? Or a 100-step argument? It seems that our confidence in the reliability of the conclusion drops, the larger or longer the argument becomes, even if each step appears sound. Elaborate modeling by definition must be very complex, perhaps combining data and results and methods and other models from other cosmologists or meteorologists from different countries of different languages with different understandings and so on. Given a set of physical realities, a model can be manipulated until it sort of fits the facts or the available data. As the elaborateness of computer modeling grows, how is its reliability, its usefulness, affected?

You are right that only the material within the Sun’s orbit around the galaxy affects the Sun’s speed; shells of material at larger radii have no effect. Actually the dark matter isn’t very important in the inner part of the Galaxy. However, the dark matter (a swarm of invisible but massive particles) extends far further out. This is inferred from observations that outlying stars in the Galaxy are moving faster than they would if they were only ‘feeling’ the gravitational pull of stars and gas. Whereas the visible Galaxy is a disc with a fairly sharp edge, the dark matter is in a more spherical distribution, and extends much further out, its density falling off more slowly with distance than the density of stars.
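The inference from fast-moving outlying stars can be made concrete. If the mass distribution is treated as spherical (a simplification) and the orbital speed stays roughly constant with radius, then the mass enclosed within radius r is about v²r/G, so it keeps growing in proportion to r. The speed of 220 km/s and the sample radii below are assumed illustrative values, and the function name is hypothetical.

```python
G = 6.674e-11     # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30  # mass of the Sun, kg
KPC = 3.086e19    # one kiloparsec, m


def enclosed_mass_solar(speed_km_s, radius_kpc):
    """Mass (in solar masses) inside radius_kpc implied by a circular orbit at speed_km_s."""
    speed = speed_km_s * 1.0e3
    radius = radius_kpc * KPC
    return speed * speed * radius / G / M_SUN


FLAT_SPEED = 220.0  # km/s, assumed constant orbital speed at all sampled radii

# Only material inside each orbit counts, yet the implied mass keeps rising outward.
masses = {r: enclosed_mass_solar(FLAT_SPEED, r) for r in (8.0, 16.0, 32.0)}

for radius_kpc, mass in masses.items():
    print(f"r = {radius_kpc:4.0f} kpc -> enclosed mass of about {mass:.1e} solar masses")
```

Doubling the radius doubles the implied enclosed mass, even in regions where the starlight has largely run out. That steady growth is what a halo whose density falls off slowly with distance would produce.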

Many thanks for this second comment. All I would say in response is that there is indeed always a risk that elaborate computer simulations will be misleading. Those doing the computations are usually careful to check the stability of their model to small changes, and to check also that the results don’t depend on the mesh size. It’s better still if different people, using quite different codes, attack the same problem and get similar results. And of course (as in models for weather prediction, etc) it’s important not only to do the computation correctly but to ensure that as much as possible of the relevant physics is included, and included properly. I would add that our understanding of many cosmic phenomena (black holes, supernovae, star formation, etc) has been hugely enhanced by the availability of more powerful computers and cleverer codes. Astronomers can’t do ‘real’ experiments, so experiments in the ‘virtual universes’ in our computers are especially valuable in developing our understanding and insight.